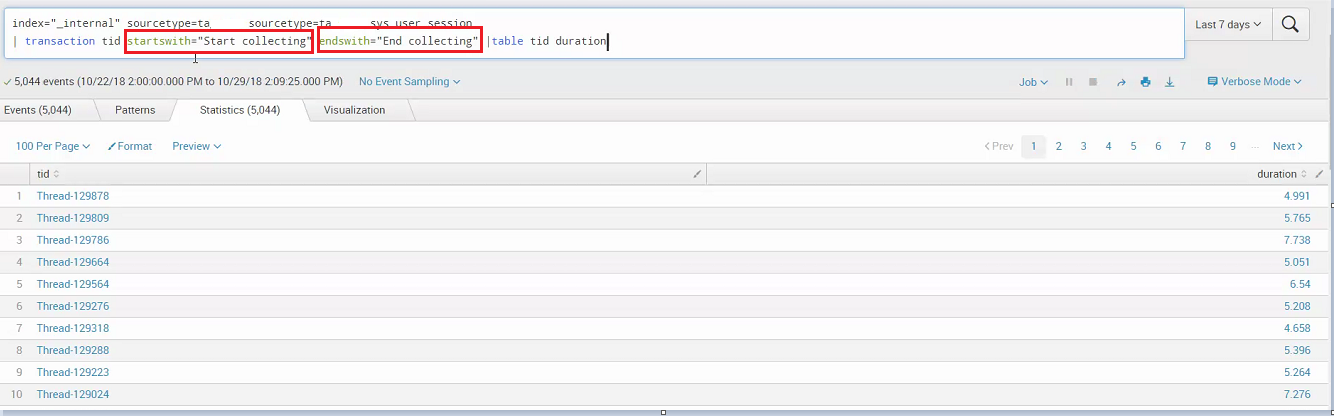

Time=2 action=assing_id, user=foo, id=123 Consider the following stream of events time=1 action=login, user=foo Given that we see events in the reverse time order, there are some pretty good chances that we see the transitive relation established after (earlier in time) it can be useful to us. If connected=false the transaction would look like event1 mid=1Ĭonnected=true means that before adding an event to a transaction the value of least one of the unifying fields must be present in at least one of the existing events in the transaction.Ĭonnected=false means that an event can be added to a transaction eventhough a transitive relation is not established between the fields already seen in the transaction and the ones present in the event When using connected=true the following trasaction will be created: (event2 is not added because at the time it is processed we don't have an established transitive relationship between mid and foo_id) event1 mid=1 Consider having these events in descending time order event1 mid=1 The Groovy Service Log Data messages will include the same content as would be included in the Groovy Service Log Data records, so it cannot be used as a complete substitute to the Groovy Service Log records.Here are two examples that explain how the connected flag affects transactions. The System Event Publisher can feed Groovy Service Log Data message to the AWS SQS endpoint to support integration with other log management systems without having to write PII data to disk. From here they can be feed into Splunk or other log management systems, regardless of the Groovy Service Logging configurations for the database. If this is configured to INFO or DEBUG level, then GroovyLogger statements at those levels will be logged to the server.log file as well. In Europe, PII is known as personal data. 
The reason for this default setting is to prevent PII Personally Identifiable Information (PII) is information about an individual that can be used to distinguish or trace an individual‘s identity, such as name, social security number, date and place of birth, mother‘s maiden name, or biometric records and any other information that is linked to an individual. The default logging level is ERROR, which means DEBUG, INFO and WARN messages are not logged to disk. If you want to analyze the Groovy service log records in Splunk, you need to know that the Manager's GroovyLogger class internally has a SLF/Log4J logger which writes messages to a service.log file. This solution creates significant amount of data in Splunk, as compared to Manager logs. In short, you will be getting duplicates of the early recorded log records. If the submission status changes multiple times (and it often does), all event messages to the AWS SQS will contain all submission's event and error log records up to that milestone. It means that, for instance, a submission status change event will have event logs and error logs linked to the submission. The logic importing this data to Splunk should consider that. It is important to understand that you will deal with messages as events, not raw exports of log data, so you need to distinguish what an event and log record mean in this context.ĭownside of such integration is that an event may contain redundant data, such as link to a transaction, within a set of messages (events) for the same transaction, which can dramatically increase amount of raw data to be stored on a client's side. Start using Splunk to analyze and monitor your applications.

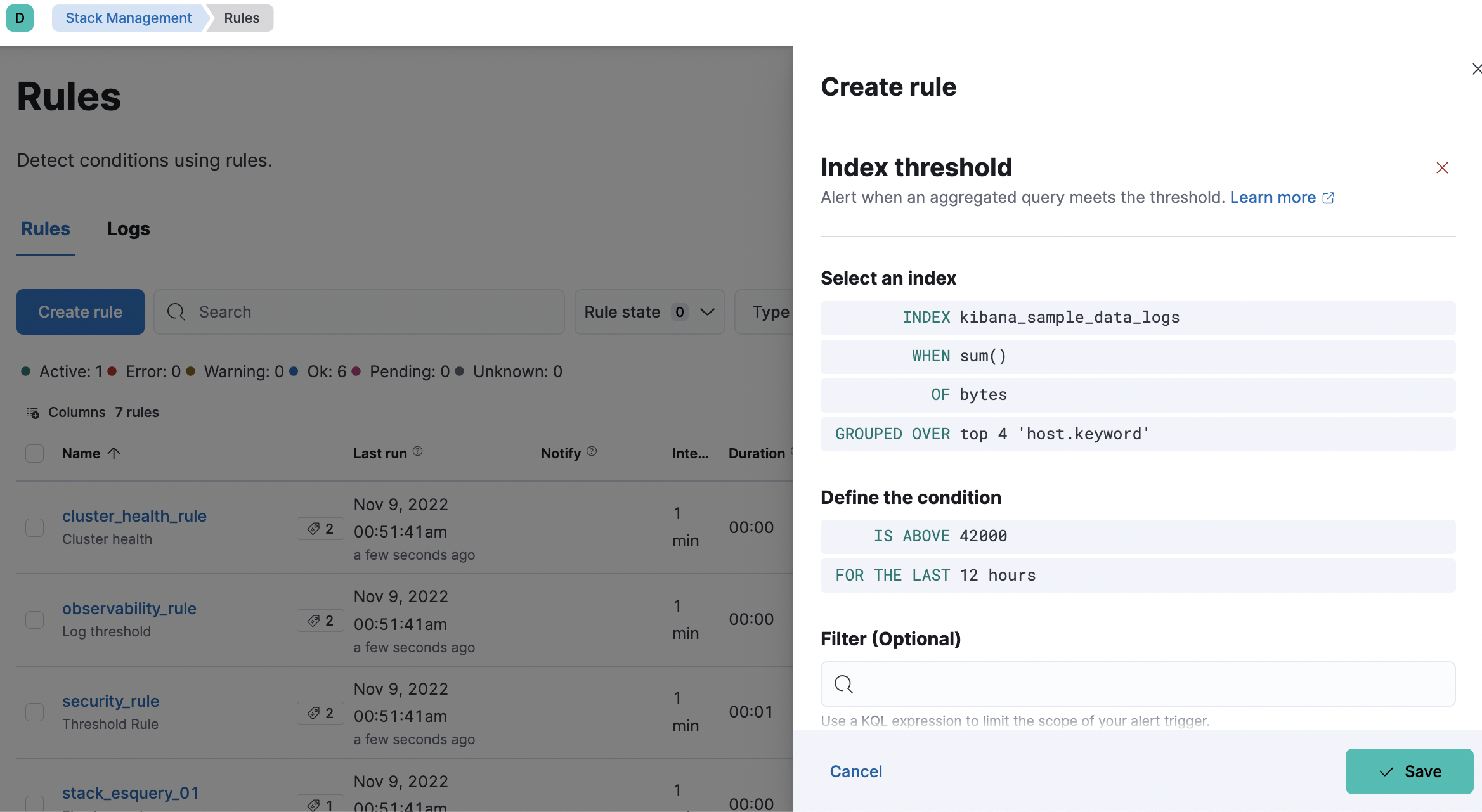

Configure Splunk AWS SQS adapter to consume (pull) the AWS SQS messages, transform and store them in the local Splunk data storage.Configure an AWS SQS adapter and configure a service connection to use the AWS SQS service.Enable and configure a system event publisher in Manager.The Splunk integration is summarized below:

Then, you integrate Splunk with the AWS SQS, so it pulls Manager log records from there. Splunk is popular software for searching, monitoring, and analyzing machine-generated data, such as log files, which you can use it to have insights into errors and perform proactive monitoring and alerts for your applications.Īlthough Manager doesn't provide a direct integration with Splunk, you can configure a System Event Publisher with an AWS SQS adapter to push log records to an AWS queue.
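The connected flag rules described in this post can be sketched in a few lines of Python. This is only a simplified model of the behavior, not Splunk's implementation: it builds a single transaction seeded by the first event and simply skips events that would otherwise start a new transaction. The function name `build_transaction` and the events `event2` and `event3` are assumptions made up for illustration; only `event1 mid=1` and the field names `mid` and `foo_id` come from the text.

```python
from typing import Dict, List, Sequence, Set

Event = Dict[str, str]


def build_transaction(events: Sequence[Event],
                      fields: Sequence[str],
                      connected: bool) -> List[Event]:
    """Collect events into one transaction, in the order they are given.

    Simplified model (an assumption, not Splunk's code):
    - an event is "consistent" if one of its unifying field values
      was already seen in the transaction;
    - an event is "inconsistent" if a field already seen in the
      transaction carries a different value in the event;
    - an event that is neither is added only when connected is False.
    """
    transaction: List[Event] = []
    seen: Dict[str, Set[str]] = {f: set() for f in fields}

    for event in events:
        present = [f for f in fields if f in event]
        if not present:
            continue  # no unifying field at all, ignore the event

        consistent = any(event[f] in seen[f] for f in present)
        inconsistent = any(bool(seen[f]) and event[f] not in seen[f]
                           for f in present)

        if not transaction:
            accept = True            # first event seeds the transaction
        elif inconsistent:
            accept = False
        elif consistent:
            accept = True
        else:
            accept = not connected   # the case the connected flag decides

        if accept:
            transaction.append(event)
            for f in present:
                seen[f].add(event[f])

    return transaction


# Hypothetical events in descending time order (event2 and event3 are invented).
events = [
    {"name": "event1", "mid": "1"},
    {"name": "event2", "foo_id": "2"},
    {"name": "event3", "mid": "1", "foo_id": "2"},
]

with_connected = [e["name"] for e in build_transaction(events, ["mid", "foo_id"], True)]
without_connected = [e["name"] for e in build_transaction(events, ["mid", "foo_id"], False)]
```

With these made-up events, `with_connected` leaves out event2, because nothing links foo_id to mid when event2 is processed, while `without_connected` keeps all three events.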

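Because each event message repeats the log records already sent with earlier events for the same submission, the import logic may want to drop records it has seen before. The sketch below shows one way to do that, assuming a hypothetical JSON message shape with `submissionId` and `logRecords` keys and a per-record `id`. None of these names come from Manager or Splunk; adapt them to the actual payload.

```python
import json
from typing import List, Set, Tuple


def extract_new_records(message_body: str,
                        seen: Set[Tuple[str, str]]) -> List[dict]:
    """Return only the log records not seen in earlier event messages.

    `seen` is updated in place with (submission id, record id) pairs.
    The message layout is an assumption made for this example.
    """
    event = json.loads(message_body)
    submission_id = event["submissionId"]

    fresh: List[dict] = []
    for record in event.get("logRecords", []):
        key = (submission_id, record["id"])
        if key in seen:
            continue  # duplicate carried over from an earlier event
        seen.add(key)
        fresh.append(record)
    return fresh


# Two hypothetical status-change events for the same submission.
first_message = json.dumps({
    "submissionId": "s-1",
    "status": "RECEIVED",
    "logRecords": [{"id": "r1", "message": "submission received"}],
})
second_message = json.dumps({
    "submissionId": "s-1",
    "status": "PROCESSED",
    "logRecords": [
        {"id": "r1", "message": "submission received"},
        {"id": "r2", "message": "submission processed"},
    ],
})

seen_keys: Set[Tuple[str, str]] = set()
first_batch = extract_new_records(first_message, seen_keys)
second_batch = extract_new_records(second_message, seen_keys)
```

Here `second_batch` contains only the record with id `r2`, since `r1` was already delivered with the first event. In a real importer the `seen` set would need to be persisted or bounded, which this sketch does not address.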